Reportedly, RKI, the Danish register of people who cannot pay their bills, gets at least one request a week from someone who wants to be added to its files (article in Danish).

The applicants do not trust their future selves to resist the temptation of a quick loan.

I find this deep skepticism towards one’s future ability to control one’s own behavior quite fascinating. In strategic situations with more than one player it is often a smart move to limit one’s own options (as in the oft-mentioned example of visibly tearing out one’s steering wheel in a game of “Chicken”). But we also constantly limit the options of our future selves when there’s no-one else involved.

As Daniel Gilbert puts it in his book Stumbling on Happiness, we spend much of our time attempting to make sure that our future selves will be happy (and Gilbert’s point is that we are often quite wrong). But we clearly also make an effort to ensure that our future selves will at some point be unhappy (e.g. when we cannot get that loan) so that our even-more-future selves will be happy (when we come to our senses). It must be quite complicated being us.

Tag: Tillid (Trust)

Interesting article on player rating

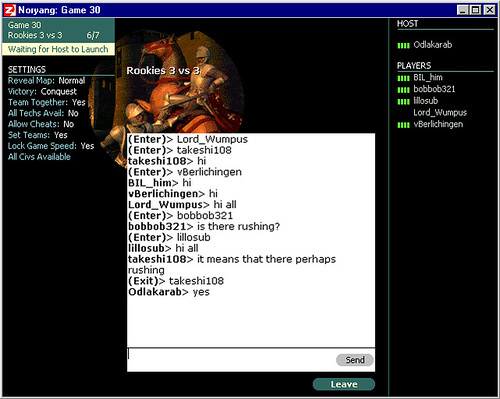

I rambled a bit below on player rating systems. On that note we have a (much more) interesting new article on Game Research about Trust, Cooperation, and Reputation in Massively Multiplayer Online Games by Tony Tulathimutte.


Player rating systems

Peer-to-peer rating systems work quite well in systems like eBay and Slashdot.

But they do so because User A has no real interest in User B’s future. In brief: A has no real reason to be dishonest.

Not so for online gamers. Here a rating can be used strategically. You may get angry with someone, but you may also see a personal advantage (in terms of relative score) in bad-mouthing that person.

Thus, we essentially need a system which either

- can distinguish between fair and unfair ratings OR

- makes it impossible to use ratings strategically

But what on Earth, I ask you, would that look like?

What about (just brainstorming):

- Limited number of total karma points (you can’t just toss them around for the heck of it)

- A player can only give a limited amount of points to any given player (to minimize the consequence of evil ratings)

- The rating is given secretly (you can’t promise or threaten someone that you’ll be nice only if they are)

- Karma points cannot be given at all until you’re fairly high-level (or whatever) to avoid people making dummy accounts to boost their own rating

- You only see ratings of your friends (or those you’ve rated positively) so the truthfulness of your rating actually affects your friends
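To make the brainstorm a bit more concrete, here is a minimal Python sketch of how those rules might fit together. Everything in it is an assumption made for illustration: the names `Player`, `give_karma` and `visible_score`, and the numbers (10 points in total, at most 3 per target, level 20 before you can rate) are made up and not taken from any actual game.

```python
from dataclasses import dataclass, field

MIN_LEVEL = 20       # assumed: minimum level before a player may rate anyone
MAX_PER_TARGET = 3   # assumed: cap on the net points one player can give another


@dataclass
class Player:
    name: str
    level: int
    budget: int = 10                           # assumed: total karma points to hand out
    given: dict = field(default_factory=dict)  # target name -> net points given


def give_karma(rater, target, points):
    """Record a rating if it obeys the rules above; return True on success."""
    if rater is target or points == 0:
        return False
    if rater.level < MIN_LEVEL:        # blocks throwaway low-level accounts
        return False
    cost = abs(points)                 # negative ratings cost points too
    if cost > rater.budget:            # limited total karma
        return False
    net = rater.given.get(target.name, 0) + points
    if abs(net) > MAX_PER_TARGET:      # limited effect on any single player
        return False
    rater.budget -= cost
    rater.given[target.name] = net
    return True


def visible_score(target, friends):
    """What a viewer sees: only the sum of ratings given by the viewer's friends.

    The target never learns who gave what, which keeps the ratings secret.
    """
    return sum(friend.given.get(target.name, 0) for friend in friends)


alice = Player("alice", level=30)
bob = Player("bob", level=25)
give_karma(alice, bob, 3)            # accepted, alice has 7 points left
give_karma(alice, bob, 1)            # rejected, would exceed the per-target cap
print(visible_score(bob, [alice]))   # what someone who trusts alice sees
```

Note that this only covers the second option (making strategic rating expensive and low-impact); it does nothing to tell fair ratings from unfair ones.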

Any good ideas?

Communication: The act of assuring a listener that the speaker would be harsh to other people

I find signalling (as in signalling theory) quite fascinating. Basically, individuals sometimes want to communicate their possession of a certain trait (in the broadest sense).

Anyway, the other day I called my bank to have my credit card re-activated (don’t ask).

I give my name, state the problem and ask the lady to fix things. She says “sure”, and then apparently remembers that there is a possible security issue. She then says (admittedly among other things): “Just to make absolutely sure you are who you say, let’s do a few check questions… Did you recently have an amount deposited by a ‘university’?”. “Oh yes, that’s correct”, I confirm. Duh! She was clearly torn between not wanting to offend (“I’m not sure you are who you say you are”) and not wanting me to think that she would let others call in and impersonate me. So the question came out as a weak compromise and she ran the checks not to actually make sure I was me, but to signal to me that she would not just let anyone call in and manage my bank affairs. Either way, it was kind of funny*.

* And reminded me of the Danish TV sketch where a hot female student is taking a chemistry exam. The examiner goes “Were you aware that Sulphuric Acid has the molecular formula H2SO4?”, to which the girl goes “Yes I was”, which utterly impresses the examiner: “Wow, that’s way beyond the curriculum!”.

Trusting those trusted by someone I trust

In my master’s thesis on The Architectures of Trust (2MB) I discussed techniques for establishing trust among strangers in online communities such as virtual worlds (around page 61). I said things like:

Another possibility is to give users access to the implicit verdicts of friends. Systems that employ buddy lists (see Figure 3) may let users see the verdicts of their ‘buddies’. For instance, Bob is wondering whether to trust Alice, but being on the buddy list of Eve, he is entitled to the information that Alice is also on the list. Since Bob trusts Eve and Eve trusts Alice, Bob can trust Alice. Such features provide internal verification.
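The buddy-list idea in that passage can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the `buddy_lists` mapping and the `vouchers` function are hypothetical names, and I assume that a user may inspect any buddy list they themselves appear on.

```python
def vouchers(viewer, target, buddy_lists):
    """Return the owners whose buddy list contains both viewer and target.

    buddy_lists maps each owner to the set of names on their list.
    A non-empty result means the viewer has an indirect reason to trust the target.
    """
    return {
        owner
        for owner, buddies in buddy_lists.items()
        if viewer in buddies and target in buddies
    }


# Assumed example data mirroring the Bob / Eve / Alice case above.
buddy_lists = {
    "Eve": {"Alice", "Bob"},
    "Mallory": {"Alice"},
}
print(vouchers("Bob", "Alice", buddy_lists))    # Eve vouches for Alice
print(vouchers("Bob", "Mallory", buddy_lists))  # nobody vouches for Mallory
```

The sketch only looks one step out. Whether trust should travel further than one buddy list is a design decision the passage leaves open.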

Although the topic of reputation systems in game worlds has been discussed since the dawn of code, implementations have been rare. One reason is that gamers often have an interest in undermining the system (an incentive far more modest in systems like eBay). But recently MonkeyModulator announced the near-future arrival of an interesting WoW add-on enabling players to evaluate each other and to share these evaluations.

I’d be quite interested in news on how that works out for you WoW die-hards.

Via TerraNova